I tested a hello world web server in C, Erlang, Java and the Go programming language.

* C, using the well-known high-performance web server nginx with a hello world nginx module

* Erlang/OTP

* Java, originally using the MINA 2.0 framework, now the JBoss Netty framework.

* Go, http://golang.org/

1. Test environment

1.1 Hardware/OS

Two Linux boxes on a gigabit Ethernet LAN: 1 server and 1 test client

Linux CentOS 5.2, 64-bit

Intel(R) Xeon(R) CPU E5410 @ 2.33GHz (L2 cache: 6 MB), 2 × quad-core

8 GB memory

SCSI disk (standalone disk, no other access)

1.2 Software version

nginx, nginx-0.7.63.tar.gz

Erlang, otp_src_R13B02-1.tar.gz

Java, jdk-6u17-linux-x64.bin, mina-2.0.0-RC1.tar.gz, netty-3.2.0.ALPHA1-dist.tar.bz2

Go, hg clone -r release https://go.googlecode.com/hg/ $GOROOT (Nov 12, 2009)

1.3 Source code and configuration

Linux kernel settings, applied with sysctl -p (from /etc/sysctl.conf):

```
net.ipv4.ip_forward = 0
net.ipv4.conf.default.rp_filter = 1
net.ipv4.conf.default.accept_source_route = 0
kernel.sysrq = 0
kernel.core_uses_pid = 1
net.ipv4.tcp_syncookies = 1
kernel.msgmnb = 65536
kernel.msgmax = 65536
kernel.shmmax = 68719476736
kernel.shmall = 4294967296
kernel.panic = 1
net.ipv4.tcp_rmem = 8192 873800 8738000
net.ipv4.tcp_wmem = 4096 655360 6553600
net.ipv4.ip_local_port_range = 1024 65000
net.core.rmem_max = 16777216
net.core.wmem_max = 16777216
```
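Each sysctl key corresponds to a file under /proc/sys, with the dots turned into slashes. That makes it easy to confirm the settings above actually took effect after sysctl -p. Below is a small Go sketch for a Linux host. The helper names `procPath` and `readSysctl` are hypothetical and not part of the original setup.

```go
package main

import (
	"fmt"
	"os"
	"strings"
)

// procPath converts a sysctl key such as "net.ipv4.tcp_rmem"
// into its /proc/sys file path ("/proc/sys/net/ipv4/tcp_rmem").
func procPath(key string) string {
	return "/proc/sys/" + strings.ReplaceAll(key, ".", "/")
}

// readSysctl returns the current value of a sysctl key with
// surrounding whitespace trimmed. Linux only.
func readSysctl(key string) (string, error) {
	b, err := os.ReadFile(procPath(key))
	if err != nil {
		return "", err
	}
	return strings.TrimSpace(string(b)), nil
}

func main() {
	for _, key := range []string{"net.ipv4.tcp_rmem", "net.ipv4.ip_local_port_range"} {
		v, err := readSysctl(key)
		if err != nil {
			fmt.Println(key, "error:", err)
			continue
		}
		fmt.Printf("%s = %s\n", key, v)
	}
}
```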

```
# ulimit -n
150000
```
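Every concurrent connection consumes at least one file descriptor, so the open-file limit has to sit well above the planned concurrency. The sketch below reads the same soft limit that `ulimit -n` prints, plus the hard limit, using `syscall.Getrlimit`. It assumes a Unix-like host. `openFileLimit` is a hypothetical helper, and the 5,000 threshold simply matches the largest test level.

```go
package main

import (
	"fmt"
	"syscall"
)

// openFileLimit reports the soft (what `ulimit -n` shows) and hard
// RLIMIT_NOFILE values for the current process.
func openFileLimit() (soft, hard uint64, err error) {
	var rl syscall.Rlimit
	err = syscall.Getrlimit(syscall.RLIMIT_NOFILE, &rl)
	return rl.Cur, rl.Max, err
}

func main() {
	soft, hard, err := openFileLimit()
	if err != nil {
		fmt.Println("getrlimit failed:", err)
		return
	}
	fmt.Printf("open files: soft=%d hard=%d\n", soft, hard)

	// Largest concurrency level used in this benchmark.
	const planned = 5000
	if soft < planned {
		fmt.Println("warning: soft limit is below the planned concurrency", planned)
	}
}
```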

C: nginx hello world module. Copy the code of ngx_http_hello_module.c from https://timyang.net/web/nginx-module/

In nginx.conf, set “worker_processes 1; worker_connections 10240” for the single-CPU test and “worker_processes 4; worker_connections 2048” for the multi-core test. Turn off all access and debug logging in nginx.conf. The single-CPU configuration is as follows:

```nginx
worker_processes  1;
worker_rlimit_nofile 10240;

events {
    worker_connections  10240;
}

http {
    include       mime.types;
    default_type  application/octet-stream;
    sendfile      on;
    keepalive_timeout  0;

    server {
        listen       8080;
        server_name  localhost;

        location / {
            root   html;
            index  index.html index.htm;
        }

        location /hello {
            ngx_hello_module;
            hello 1234;
        }

        error_page   500 502 503 504  /50x.html;
        location = /50x.html {
            root   html;
        }
    }
}
```

```
$ taskset -c 1 ./nginx      # single-CPU test
$ taskset -c 1-7 ./nginx    # multi-core test
```
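Each nginx worker can hold at most worker_connections simultaneous connections. The rough upper bound on concurrent connections is therefore worker_processes × worker_connections. A trivial Go sketch of that arithmetic for the two configurations follows. `maxClients` is a hypothetical name, and the bound ignores any connections nginx itself opens, for example to upstreams.

```go
package main

import "fmt"

// maxClients returns the rough upper bound on simultaneous connections:
// worker_processes * worker_connections.
func maxClients(workerProcesses, workerConnections int) int {
	return workerProcesses * workerConnections
}

func main() {
	fmt.Println("single-core config:", maxClients(1, 10240)) // 10240
	fmt.Println("multi-core config:", maxClients(4, 2048))   // 8192
}
```

Both settings stay above the 5,000 concurrent clients used in the heaviest test.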

Erlang hello world server

The source code is available on yufeng’s blog: http://blog.yufeng.info/archives/105

Just copy the code after “cat ehttpd.erl” and compile it.

```
$ erlc ehttpd.erl
$ taskset -c 1 erl +K true +h 99999 +P 99999 -smp enable +S 2:1 -s ehttpd
$ taskset -c 1-7 erl +K true -s ehttpd
```

We use taskset to restrict the Erlang VM either to CPU 1 only or to CPUs 1-7. The first erl command runs in single-CPU mode, and the second runs in multi-core mode.

Java source code (MINA 2.0): save the two classes as HttpServer.java and HttpProtocolHandler.java, with the necessary imports.

```java
import java.net.InetSocketAddress;

import org.apache.mina.transport.socket.SocketAcceptor;
import org.apache.mina.transport.socket.nio.NioSocketAcceptor;

public class HttpServer {
    public static void main(String[] args) throws Exception {
        // 4 NIO processors handle the I/O
        SocketAcceptor acceptor = new NioSocketAcceptor(4);
        acceptor.setReuseAddress(true);

        int port = 8080;
        String hostname = null;
        if (args.length > 1) {
            hostname = args[0];
            port = Integer.parseInt(args[1]);
        }

        // Bind
        acceptor.setHandler(new HttpProtocolHandler());
        if (hostname != null)
            acceptor.bind(new InetSocketAddress(hostname, port));
        else
            acceptor.bind(new InetSocketAddress(port));

        System.out.println("Listening on port " + port);
        // Keep the main thread alive; the acceptor threads do the work.
        Thread.currentThread().join();
    }
}
```

```java
import org.apache.mina.core.buffer.IoBuffer;
import org.apache.mina.core.service.IoHandlerAdapter;
import org.apache.mina.core.session.IdleStatus;
import org.apache.mina.core.session.IoSession;
import org.apache.mina.filter.ssl.SslFilter;

public class HttpProtocolHandler extends IoHandlerAdapter {
    public void sessionCreated(IoSession session) {
        session.getConfig().setIdleTime(IdleStatus.BOTH_IDLE, 10);
        session.setAttribute(SslFilter.USE_NOTIFICATION);
    }

    public void sessionClosed(IoSession session) throws Exception {}

    public void sessionOpened(IoSession session) throws Exception {}

    public void sessionIdle(IoSession session, IdleStatus status) {}

    public void exceptionCaught(IoSession session, Throwable cause) {
        session.close(true); // close immediately
    }

    // Canned response; "hello world\r\n" is 13 bytes.
    static IoBuffer RESULT = null;
    public static String HTTP_200 = "HTTP/1.1 200 OK\r\nContent-Length: 13\r\n\r\n" +
            "hello world\r\n";

    static {
        RESULT = IoBuffer.allocate(32).setAutoExpand(true);
        RESULT.put(HTTP_200.getBytes());
        RESULT.flip();
    }

    public void messageReceived(IoSession session, Object message)
            throws Exception {
        if (message instanceof IoBuffer) {
            IoBuffer buf = (IoBuffer) message;
            // Only the first byte is checked; the request is not parsed.
            int c = buf.get();
            if (c == 'G' || c == 'g') {
                session.write(RESULT.duplicate());
            }
            // Close once the pending write has been flushed.
            session.close(false);
        }
    }
}
```

Nov 24 update: because the MINA code above doesn’t parse the HTTP request or handle the necessary HTTP protocol, it was replaced with org.jboss.netty.example.http.snoop.HttpServer from the Netty examples. All the StringBuilder code was removed from HttpRequestHandler.messageReceived(), and HttpRequestHandler.writeResponse() just returns a “hello world” result. Please read the source code and the Netty documentation for more information.
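The MINA handler only checks the first byte, whereas a real HTTP server parses the request line and headers. As a rough illustration in Go, rather than Java, and not the Netty code that was benchmarked, the standard library's `http.ReadRequest` performs that parsing. `parseRequest` is a hypothetical helper, and the request headers in `main` are an assumed ApacheBench-style example.

```go
package main

import (
	"bufio"
	"fmt"
	"net/http"
	"strings"
)

// parseRequest parses a raw HTTP/1.x request (request line + headers)
// instead of only inspecting the first byte as the MINA handler does.
func parseRequest(raw string) (*http.Request, error) {
	return http.ReadRequest(bufio.NewReader(strings.NewReader(raw)))
}

func main() {
	// An ApacheBench-style request; the exact headers are assumed.
	raw := "GET / HTTP/1.0\r\nHost: 192.168.10.1:8080\r\nUser-Agent: ApacheBench/2.3\r\nAccept: */*\r\n\r\n"
	req, err := parseRequest(raw)
	if err != nil {
		panic(err)
	}
	fmt.Println(req.Method, req.URL.Path, req.Proto, req.Header.Get("User-Agent"))
}
```

Unlike the first-byte check, a malformed request line is rejected with an error here.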

```
$ taskset -c 1-7 \
    java -server -Xmx1024m -Xms1024m -XX:+UseConcMarkSweepGC -classpath . test.HttpServer 192.168.10.1 8080
```

We use taskset to restrict Java to CPUs 1-7 and keep it off CPU0, because we want CPU0 dedicated to the system (Linux uses CPU0 for network interrupts).

Go source code (httpd2.go):

```go
// Written for the Nov 2009 Go release (pre-Go 1 API).
package main

import (
	"http";
	"io";
	"runtime";
)

func HelloServer(c *http.Conn, req *http.Request) {
	io.WriteString(c, "hello, world!\n");
}

func main() {
	runtime.GOMAXPROCS(8); // 8 cores
	http.Handle("/", http.HandlerFunc(HelloServer));
	err := http.ListenAndServe(":8080", nil);
	if err != nil {
		panic("ListenAndServe: ", err.String())
	}
}
```

```
$ 6g httpd2.go
$ 6l httpd2.6
$ taskset -c 1-7 ./6.out
```

1.4 Performance test client

ApacheBench client, at 30, 100, 1,000 and 5,000 concurrent threads:

```
ab -c 30 -n 1000000 http://192.168.10.1:8080/
ab -c 100 -n 1000000 http://192.168.10.1:8080/
```

1,000 threads: 334 from each of 3 different machines

```
ab -c 334 -n 334000 http://192.168.10.1:8080/
```

5,000 threads: 1,667 from each of 3 different machines

```
ab -c 1667 -n 334000 http://192.168.10.1:8080/
```
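A single ab process became a bottleneck, so the 1,000 and 5,000 levels were split over three machines. The per-machine concurrency (334 and 1,667) is the target divided by three, rounded up. Here is a trivial Go sketch of that arithmetic; `perMachine` is a hypothetical helper.

```go
package main

import "fmt"

// perMachine splits a target concurrency across several client machines,
// rounding up so the combined load is at least the target.
func perMachine(target, machines int) int {
	return (target + machines - 1) / machines
}

func main() {
	for _, target := range []int{1000, 5000} {
		c := perMachine(target, 3)
		fmt.Printf("target %d: ab -c %d on each of 3 machines (%d total)\n", target, c, c*3)
	}
}
```

With -n 334000 on each machine, a run sends about 1,002,000 requests in total.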

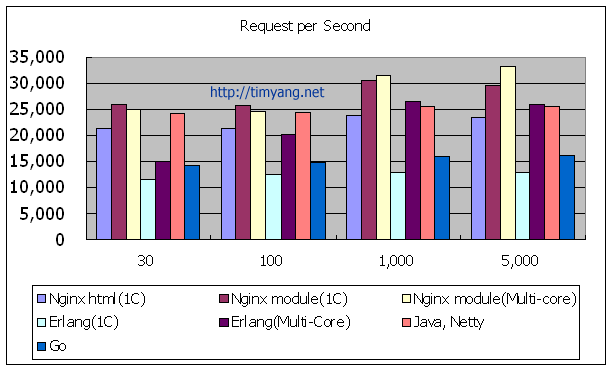

2. Test results

2.1 Requests per second

| Server | 30 threads | 100 threads | 1,000 threads | 5,000 threads |
|---|---|---|---|---|
| Nginx html (1C) | 21,301 | 21,331 | 23,746 | 23,502 |
| Nginx module (1C) | 25,809 | 25,735 | 30,380 | 29,667 |
| Nginx module (multi-core) | 25,057 | 24,507 | 31,544 | 33,274 |
| Erlang (1C) | 11,585 | 12,367 | 12,852 | 12,815 |
| Erlang (multi-core) | 15,101 | 20,255 | 26,468 | 25,865 |
| Java, Mina2 (without HTTP parse) | 30,631 | 26,846 | 31,911 | 31,653 |
| Java, Netty | 24,152 | 24,423 | 25,487 | 25,521 |
| Go | 14,080 | 14,748 | 15,799 | 16,110 |

2.2 Latency: 99% of requests completed within (ms)

| Server | 30 threads | 100 threads | 1,000 threads | 5,000 threads |
|---|---|---|---|---|
| Nginx html (1C) | 1 | 4 | 42 | 3,079 |
| Nginx module (1C) | 1 | 4 | 32 | 3,047 |
| Nginx module (multi-core) | 1 | 6 | 205 | 3,036 |
| Erlang (1C) | 3 | 8 | 629 | 6,337 |
| Erlang (multi-core) | 2 | 7 | 223 | 3,084 |
| Java, Netty | 1 | 3 | 3 | 3,084 |
| Go | 26 | 33 | 47 | 9,005 |

3. Notes

* Under large numbers of concurrent connections, C, Erlang and Java show no big difference in performance; the results are very close.

* Java runs better at low concurrency, but the MINA code in this test doesn’t parse the HTTP request header.

* Although Mr. Yu Feng (the Erlang guru in China) mentioned that Erlang performs better on a single CPU (it avoids context switches), the results show that Erlang has high latency (> 1 s) under 1,000 or 5,000 connections.

* Go is very close to Erlang, but still doesn’t hold up well under heavy load (5,000 threads).

After redoing the 1,000 and 5,000 tests on Nov 18:

* Nginx module is the winner at 5,000 concurrent requests.

* Although Go has room for improvement, its performance stays about the same from 30 to 5,000 threads.

* Erlang processes are impressive under large numbers of concurrent requests, still as good as nginx at 5,000 threads.

4. Update Log

Nov 12: changed worker_connections in nginx.conf from 1024 to 10240.

Nov 13: added runtime.GOMAXPROCS(8) to the Go code; added the sysctl -p settings.

Nov 18: realized that ApacheBench itself is a bottleneck at 1,000 or 5,000 threads, so all 1,000 and 5,000 concurrency tests were redone with clients on 3 different machines.

Nov 24: replaced MINA 2 with Netty, which has a full HTTP implementation, for the Java web server. It is still very fast with low latency after adding the HTTP handling code.

Erlang is much slower without JIT enabled; that’s roughly the numbers you measured.

How about trying the latest Go HTTP server?

There’s one in godoc…

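In my tests the Erlang version has very low latency, around tens of ms. I think the bottleneck of this test is the NIC, because the NIC has to handle 100K interrupts per second.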

This further confirms this discussion:

http://www.javaeye.com/topic/107476?page=1

Could you add test results at higher concurrency?

@yufeng I used taskset -c 1-7 specifically to leave CPU0 free for handling NIC interrupts.

If possible, try redoing the test on 10+ cores.

@Stewart, I don’t have a spare server with 10+ cores, and I think 8 cores are enough; the bottleneck may not be the CPU.

Go’s futex contention is far too heavy:

```
accept(3, {sa_family=AF_INET6, sin6_port=htons(55263), inet_pton(AF_INET6, "::ffff:192.168.235.142", &sin6_addr), sin6_flowinfo=0, sin6_scope_id=0}, [28]) = 8
futex(0x3f41d4, FUTEX_WAIT, 3, {1073741824, 0}) = 0
futex(0x80ca8ac, FUTEX_WAKE, 1) = 1
fcntl(8, F_SETFD, FD_CLOEXEC) = 0
futex(0x3f41d4, FUTEX_WAIT, 3, {1073741824, 0}) = 0
futex(0x80ca8ac, FUTEX_WAKE, 1) = 1
fcntl(8, F_GETFL) = 0x2 (flags O_RDWR)
futex(0x3f41d4, FUTEX_WAIT, 3, {1073741824, 0}) = 0
futex(0x80ca8ac, FUTEX_WAKE, 1) = 1
fcntl(8, F_SETFL, O_RDWR|O_NONBLOCK) = 0
futex(0x3f41d4, FUTEX_WAIT, 3, {1073741824, 0}) = 0
futex(0x80ca8ac, FUTEX_WAKE, 1) = 1
setsockopt(8, SOL_TCP, TCP_NODELAY, [1], 4) = 0
futex(0x3f41d4, FUTEX_WAIT, 3, {1073741824, 0}) = 0
futex(0x80ca8ac, FUTEX_WAKE, 1) = 1
futex(0x3f41d4, FUTEX_WAIT, 3, {1073741824, 0}) = 0
read(8, "GET / HTTP/1.0\r\nUser-Agent: Apac"..., 4096) = 95
write(8, "HTTP/1.0 200 OK\r\nContent-Type: t"..., 73) = 73
fcntl(8, F_GETFL) = 0x802 (flags O_RDWR|O_NONBLOCK)
fcntl(8, F_SETFL, O_RDWR) = 0
close(8) = 0
```

From the system calls and the source code, you can see that Go’s goroutines run on OS threads, and the threads schedule goroutines through a mutex-protected queue.

```
// Go scheduler
//
// The go scheduler's job is to match ready-to-run goroutines (`g's)
// with waiting-for-work schedulers (`m's). If there are ready gs
// and no waiting ms, ready() will start a new m running in a new
// OS thread, so that all ready gs can run simultaneously, up to a limit.
// For now, ms never go away.
//
// By default, Go keeps only one kernel thread (m) running user code
// at a single time; other threads may be blocked in the operating system.
// Setting the environment variable $GOMAXPROCS or calling
// runtime.GOMAXPROCS() will change the number of user threads
// allowed to execute simultaneously. $GOMAXPROCS is thus an
// approximation of the maximum number of cores to use.
```

```
./src/pkg/runtime/#proc.c#:19:// The go scheduler's job is to match ready-to-run goroutines (`g's)
./src/pkg/runtime/#proc.c#:128: // If main·init_function started other goroutines,
./src/pkg/runtime/#proc.c#:151: printf("\ngoroutine %d:\n", g->goid);
./src/pkg/runtime/#proc.c#:279:// new goroutines.
./src/pkg/runtime/#proc.c#:299:// Get the next goroutine that m should run.
./src/pkg/runtime/#proc.c#:341: throw("all goroutines are asleep - deadlock!");
./src/pkg/runtime/#proc.c#:353: throw("bad m->nextg in nextgoroutine");
./src/pkg/runtime/#proc.c#:411:// start looking for goroutines shortly.
./src/pkg/runtime/#proc.c#:522:// The goroutine g is about to enter a system call.
./src/pkg/runtime/#proc.c#:553:// The goroutine g exited its system call.
./src/pkg/sort/sort.go:10:// sorted by the routines in this package. The methods require that the
Binary file ./src/pkg/sort/8.out matches
./src/pkg/strconv/atof_test.go:96: // The atof routines return NumErrors wrapping
```

Also, in the tests above, both nginx and Erlang do full HTTP message parsing, and that takes time.

Hi Tim,

Could you show us your nginx.conf for this benchmark? I think it’s a little bit unfair to nginx if you turned the CPU affinity switch (worker_cpu_affinity) off while other applications turned it on. Probably more tuning options could be used, I guess, such as deferred accept, accept_mutex, etc. BTW, it seems that your Java version server didn’t parse HTTP requests at all?

Go doesn’t use epoll event dispatching; it still serializes accept, read, write and close.

Changed worker_connections in nginx.conf from 1024 to 10240; nginx runs better under 5,000 threads.

The data has already been updated.

Hi Tim,

Could you test Go by compiling with gccgo? It’d be interesting to see the results with a better optimising compiler. 6g was built for compilation speed, so it doesn’t optimise as well, AFAIK.

Thanks

ah sorry I glanced and saw quad-core, not the expected dual quad-core 😉

@Andrew, according to the official Go FAQ, using gccgo is not encouraged:

“… Gccgo is a GCC front-end that can, with care, be linked with GCC-compiled C or C++ programs. However, because Go is garbage-collected it will be unwise to do so, at least naively. “,

and from go/src/doc/gccgo_install.html

Some Go features are not yet implemented in gccgo. As of 2009-11-06, the following are not implemented:

* Garbage collection is not implemented. There is no way to free memory. Thus long running programs are not supported.

* goroutines are implemented as NPTL threads with a fixed stack size. The number of goroutines that may be created at one time is limited.

Without GC and goroutines, I don’t think a gccgo test makes sense; few people will build their projects on gccgo.

So does this show that the Go language is very scalable? Can it be tested to the point where Go begins to drop?

@Alex

FYI, Go test results:

8,000 threads: 5,632.55

10,000 threads: 5,105.55

You need to run java with -server, right?

This test is far from fair. The Java code does not parse the incoming requests at all. It checks if the request starts with a “G” and simply writes the response. A language comparison should be done on a much lower level without layers and layers of library code involved.

How is your system tuned? That is, how long do your connections stay in TIME_WAIT, and what are your ephemeral port settings? These all contribute to potential performance issues.

How many cores was the Go example using? Did you try enabling the goroutines to be multiplexed across multiple cores?

You can use the GOMAXPROCS environment variable or see GOMAXPROCS in the runtime package. For an example of runtime usage see:

test/bench/spectral-norm-parallel.go

@jay, on amd64/x86_64 the default Java VM is the server VM.

@Java, yes, the Java code doesn’t parse HTTP; I will add parsing code if possible.

@mark, please see the new sysctl -p configuration in the post.

@Chris, thanks. runtime.GOMAXPROCS(nCPU) does make sense; the change is significant, and I’ve already updated the Go data.

It would be interesting if the CPU and memory consumption during the benchmark could be shown 🙂

To Chris Double: the Go goroutine scheduler and GOMAXPROCS

The go scheduler’s job is to match ready-to-run goroutines (`g’s) with waiting-for-work schedulers (`m’s). If there are ready gs and no waiting ms, ready() will start a new m running in a new OS thread, so that all ready gs can run simultaneously, up to a limit.

For now, ms never go away.

The limit is GOMAXPROCS, so it helps only when the system is fully loaded.

For the Go version, you could also use lockosthread to bind CPU affinity.

Adding a test of a fully functional Java web server might be more convincing, e.g. Jetty http://www.mortbay.org/jetty/ + a HelloWorld Handler/Servlet.

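To test Go, you should compile with gccgo rather than 6g.

6g / 8g are for testing whether Go code works.

gccgo is the compiler meant for building production programs.

Also, could you use goroutines?

That would better test how effective Go’s main feature, concurrency, really is.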

Thank you, sir.

I introduced your very cool benchmark on my Japanese site.

Thank you!